DeepGlobe Road Extraction - Challenge

an end-to-end deep learning case study

Photo by Jaromír Kavan on Unsplash

Introduction

The Geoscience and Remote Sensing Society, a well-known community for learning about and contributing to geospatial science, sponsored the DeepGlobe machine vision challenge in 2018, which centers on the deep analysis of satellite images of Earth.

The 3 challenges are -

- Road Extraction

- Building Detection

- Land Cover Classification

As part of this, I picked the Road Extraction problem, since roads play a crucial role in transportation, traffic management, city planning, road monitoring, GPS navigation, and more.

The challenges of DeepGlobe are purely research-based and focus on the real problems.

DL Problem Formulation

Data Overview

In total, we have 14796 images, of which:

- 6226 → training satellite images

- 6226 → training mask images

- 1243 → validation satellite images

- 1101 → test satellite images

The validation and test sets do not come with mask images. The masks are what we need to predict.

Problem Type

If we observe the masks, there are 2 classes. Therefore, it is a binary image segmentation task. It is a computer vision task, since we are dealing with images (satellite images).

Performance Metric

Since it is an image segmentation task, the metrics that we stick to are -

IoU Score - the area of overlap between the predicted segmentation and the ground truth, divided by the area of their union.

An IoU of 0 means there is no overlap between the predicted segmentation and the ground truth. An IoU of 1 means the predicted segmentation and the ground truth are identical.

Accuracy - the ratio of correct predictions to the total number of predictions (here, pixels). The one caveat is that this metric is only meaningful when the classes are roughly balanced, that is, when each class has a similar number of samples.
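To make the two metrics concrete, here is a minimal NumPy sketch. The function names `iou_score` and `pixel_accuracy` are my own, and treating two empty masks as a perfect match is a convention I am assuming rather than something the challenge prescribes. The toy example shows why accuracy alone can mislead when one class dominates.

```python
import numpy as np


def iou_score(y_true, y_pred):
    """Intersection over union for two binary masks (non-zero = road)."""
    y_true = np.asarray(y_true).astype(bool)
    y_pred = np.asarray(y_pred).astype(bool)
    union = np.logical_or(y_true, y_pred).sum()
    if union == 0:
        # Both masks are empty: treated as a perfect match (an assumed convention).
        return 1.0
    return float(np.logical_and(y_true, y_pred).sum() / union)


def pixel_accuracy(y_true, y_pred):
    """Fraction of pixels whose predicted class matches the ground truth."""
    y_true = np.asarray(y_true).astype(bool)
    y_pred = np.asarray(y_pred).astype(bool)
    return float((y_true == y_pred).mean())


# Toy 10x10 mask: a single road column, so 10% of the pixels are road.
truth = np.zeros((10, 10), dtype=np.uint8)
truth[:, 4] = 255

# A "model" that never predicts road at all.
all_background = np.zeros_like(truth)

print(pixel_accuracy(truth, all_background))  # 0.9
print(iou_score(truth, all_background))       # 0.0
print(iou_score(truth, truth))                # 1.0
```

A prediction that misses every road still scores 90% accuracy here, while its IoU is 0. That is the reason to lean on IoU for this task.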

Data Download

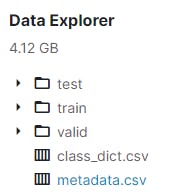

The dataset takes up around 4 GB. We can download it easily with the Kaggle API or the CurlWget Chrome extension, both of which take less time than a regular download. For convenience, I have already downloaded it to my system. The structure of the data after extracting the files is as follows.

The `metadata.csv` file contains image paths for all training, validation, and test sets.
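As a rough sketch of how this file can be used, the snippet below groups the rows by split using only the standard library. The column names (`image_id`, `split`, `sat_image_path`, `mask_path`), the file names, and the sample rows are assumptions for illustration, so check them against the header of the real file.

```python
import csv
import io
from collections import Counter

# Hypothetical excerpt: column names and paths are assumptions, not the real file.
SAMPLE_METADATA = """image_id,split,sat_image_path,mask_path
100034,train,train/100034_sat.jpg,train/100034_mask.png
100393,train,train/100393_sat.jpg,train/100393_mask.png
100794,valid,valid/100794_sat.jpg,
100905,test,test/100905_sat.jpg,
"""


def load_metadata(csv_file):
    """Read metadata rows from an open text file into a list of dicts."""
    return list(csv.DictReader(csv_file))


def paths_for_split(rows, split):
    """Return (satellite_path, mask_path_or_None) pairs for one split."""
    return [
        (row["sat_image_path"], row["mask_path"] or None)
        for row in rows
        if row["split"] == split
    ]


rows = load_metadata(io.StringIO(SAMPLE_METADATA))
split_counts = Counter(row["split"] for row in rows)
train_pairs = paths_for_split(rows, "train")
print(split_counts["train"], split_counts["valid"], split_counts["test"])  # 2 1 1
```

With the real dataset, the same function can be called as `load_metadata(open("metadata.csv", newline=""))`. Rows without a mask come back with `None` as the mask path.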

Exploratory Data Analysis

Originally, the size of each image is (1024, 1024). Training on images of this size would require far more memory and computation, so we need to bring the images down to a smaller, uniform size.

It is also noted that some of the training masks are not binary images. A binary image is one whose pixels are either 0 or 255. To maintain uniformity, we need to binarize the training masks with the help of the OpenCV package.

Binarization

To learn more about image binarization, feel free to check my blog.
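As a minimal sketch of the idea, the thresholding can be written in plain NumPy. The function name `binarize_mask` and the threshold of 127 are assumptions of mine. For a single-channel 8-bit mask, OpenCV's `cv2.threshold(mask, 127, 255, cv2.THRESH_BINARY)` gives the same result.

```python
import numpy as np


def binarize_mask(mask, threshold=127):
    """Map every pixel above `threshold` to 255 and all others to 0."""
    mask = np.asarray(mask)
    if mask.ndim == 3:
        # Collapse colour channels into one grey channel before thresholding.
        mask = mask.mean(axis=2)
    return np.where(mask > threshold, 255, 0).astype(np.uint8)


# A tiny mask with a few in-between values, as found in non-binary masks.
noisy = np.array([[0, 3, 250], [128, 127, 255]], dtype=np.uint8)
clean = binarize_mask(noisy)
print(clean.tolist())  # [[0, 0, 255], [255, 0, 255]]
```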

The outputs are as follows.

Image Resizing

The target shape for each image is (256, 256). We need to resize all the images in the training, validation, and test sets.

Some practitioners use (128, 128) instead.
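Here is a hedged sketch of the resizing step in plain NumPy, assuming every input is exactly 1024 x 1024 so that a factor of 4 lands on 256 x 256. The function names are my own. With OpenCV, `cv2.resize(image, (256, 256), interpolation=cv2.INTER_AREA)` for satellite images and `cv2.INTER_NEAREST` for masks would be the usual library route.

```python
import numpy as np


def downscale_image(image, factor=4):
    """Shrink an (H, W, C) image by averaging each factor x factor block."""
    h, w, c = image.shape
    if h % factor or w % factor:
        raise ValueError("image sides must be divisible by the factor")
    blocks = image.reshape(h // factor, factor, w // factor, factor, c)
    return blocks.mean(axis=(1, 3)).round().astype(image.dtype)


def downscale_mask(mask, factor=4):
    """Shrink an (H, W) mask by keeping every factor-th pixel (nearest neighbour)."""
    return mask[::factor, ::factor]


# Random stand-in for a satellite image, plus a mask with one vertical road.
sat = np.random.default_rng(0).integers(0, 256, size=(1024, 1024, 3), dtype=np.uint8)
mask = np.zeros((1024, 1024), dtype=np.uint8)
mask[:, 512:520] = 255

small_sat = downscale_image(sat)
small_mask = downscale_mask(mask)
print(small_sat.shape, small_mask.shape)  # (256, 256, 3) (256, 256)
```

The mask is resized with nearest-neighbour sampling on purpose. Averaging would create grey values between 0 and 255 and undo the binarization.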

Training Images

Let's visualize a sample of the training data that includes both a satellite image and its respective mask image.

Judging by this sample alone, the task mostly involves extracting the larger paths that are clearly visible.

Validation Images

It would have been perfect if there were validation mask images so that it could become easy to track the models' performance. Unfortunately, we are not provided the validation data with the mask images.

To work around this problem, we can hold out 1% of the training data as a validation set and train the models on the rest.
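A minimal sketch of such a hold-out split, using only the standard library. The function name, the fixed seed, and the stand-in ids are assumptions for illustration.

```python
import random


def split_train_validation(image_ids, validation_fraction=0.01, seed=42):
    """Shuffle the ids reproducibly and hold out a fraction for validation."""
    ids = list(image_ids)
    random.Random(seed).shuffle(ids)
    n_val = max(1, round(len(ids) * validation_fraction))
    return ids[n_val:], ids[:n_val]


# Stand-in ids; in practice these would be the ids of the training images.
all_ids = range(6226)
train_ids, val_ids = split_train_validation(all_ids)
print(len(train_ids), len(val_ids))  # 6164 62
```

With 6226 training pairs, 1% leaves only 62 images for validation, so the validation scores will be fairly noisy.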

This sample set contains a mix of larger paths and thinner paths.

Test Images

The test dataset does not contain the targets or mask images. This is something we need to predict. Let's visualize the same.

This sample set contains a mix of larger paths and thinner paths.

This ends our EDA. However, if you would like to know the complete details with all the code, you can check my notebook hosted on the Jovian platform.

Transfer Learning

Transfer learning is a technique in ML and DL in which the knowledge a model gained while being trained on one problem is stored and reused to solve another, related problem.

The main advantage of transfer learning is that we do not have to train the models from scratch and can instead use pre-trained ones. TensorFlow by Google is a handy library for implementing deep learning models. It also includes various pre-trained models that can be adapted to many problem statements with slight tweaks to the model's architecture.

We are dividing this modeling (transfer learning) part into 2 aspects.

No Augmentation

ResNet34

The below output is the validation data prediction. The rightmost image is the prediction mask and we can observe that for those images that contain larger paths, the model is able to extract the path, but not as accurately as it needs to.

The below output is the test data prediction. The right image is the prediction mask. As with the validation data, the model identifies only the larger paths and not the thinner ones. We can also see that some of the predicted paths are incomplete.

VGG16

The below output is the validation data prediction. The rightmost image is the prediction mask and we can observe that the performance is quite similar to the previous (ResNet34) model. Complex path patterns that are partly covered by bushes are not identified properly.

The below output is the test data prediction. The right image is the prediction mask. As usual, the model only identifies the larger paths but not the thinner paths. We can also see some incomplete paths that have been predicted.

MobileNet

The below output is the validation data prediction. The rightmost image is the prediction mask and we can observe that for those images that contain larger paths, the model is able to extract the path, but not as accurately as it needs to.

The below output is the test data prediction. The right image is the prediction mask. The model's performance is not up to the mark compared with the previous models.

Inception

The below output is the validation data prediction. The rightmost image is the prediction mask and we can observe that for those images that contain larger paths, the model is able to extract the path, but not as accurately as it needs to.

For the complex patterns, the model doesn't perform well. But it does a pretty good job of identifying the thinner patterns that are clearly visible.

The below output is the test data prediction. The right image is the prediction mask. It does a good job on some patterns that ResNet34 and VGG16 fail to identify.

ResNet50

The below output is the validation data prediction. The rightmost image is the prediction mask. It was only able to extract the larger paths that looked very clear.

Test data prediction.

Augmentation

I trained the same set of models on slightly augmented data. The results are not up to the mark.
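One detail matters for segmentation whatever transforms are chosen: any geometric change applied to the satellite image must be applied identically to its mask, or the labels no longer line up. The sketch below illustrates this with random flips and quarter turns. These transforms are stand-ins I picked for illustration, not necessarily the ones used in my training runs.

```python
import numpy as np


def augment_pair(image, mask, rng):
    """Apply the same random flips and 90-degree rotation to image and mask."""
    if rng.random() < 0.5:  # horizontal flip
        image, mask = image[:, ::-1], mask[:, ::-1]
    if rng.random() < 0.5:  # vertical flip
        image, mask = image[::-1], mask[::-1]
    k = int(rng.integers(0, 4))  # number of quarter turns
    image = np.rot90(image, k, axes=(0, 1))
    mask = np.rot90(mask, k, axes=(0, 1))
    return np.ascontiguousarray(image), np.ascontiguousarray(mask)


# Toy pair: the "image" simply repeats the mask in all three channels,
# which makes it easy to check that both stay aligned after augmentation.
rng = np.random.default_rng(0)
mask = np.zeros((256, 256), dtype=np.uint8)
mask[100:110, :] = 255
image = np.stack([mask, mask, mask], axis=-1)

aug_image, aug_mask = augment_pair(image, mask, rng)
print(np.array_equal(aug_image[..., 0], aug_mask))  # True
```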

Model Performance

Let's compare the results in order to select the best model.

The models trained on augmented data perform worse than those trained on the original (no augmentation) data, as a comparison of the individual models' performance shows.

In fact, the masks obtained for the augmented data are not as clear as the masks obtained for the original (no augmentation) data.

Hence, it does not make sense to choose any of the models trained on the augmented data.

That leaves selecting the best model among those trained on the original (no augmentation) data.

The best models among all of the above are ResNet34, VGG16, and Inception.

Feel free to check my notebook for transfer learning hosted on the Jovian platform.

U-NET Model

Apart from implementing transfer learning models, I also wanted to cross-check the performance by implementing U-NET, a well-known deep learning architecture that is widely used for image segmentation tasks.

The performance of the U-NET model is significantly better than that of the transfer learning models. As before, we shall divide this into 2 aspects.

No Augmentation

The below output is the validation data prediction. The rightmost image is the prediction mask and we can observe that the model is able to extract most of the paths (including thinner paths).

Even paths that are not highlighted in the original mask are still identified by this model.

Test data prediction.

Augmentation

In the case of data augmentation, the model still performs well. Augmentation has been helpful in identifying complex patterns. Results of the validation prediction can be seen below.

Test data prediction.

Model Performance

The above are the results obtained by the U-NET model developed from scratch.

The left image corresponds to the results of the model trained on the original (no augmentation) data.

The right image corresponds to the results of the model trained on the augmented data.

From the graphs, we can say that the left model shows slight overfitting compared with the right one, although the difference seems negligible.

Personally, I feel that the model trained on the augmented data does show some closer predictions. This is something we should observe in the process of error analysis.

Feel free to check my notebook for U-NET modeling hosted on the Jovian platform.

Error Analysis

Error analysis is very helpful, especially when we have multiple models, for deciding which model is the best. It is also the stage where we learn how to improve the model or the dataset.

In total, we have 6 models, each trained on both augmented and non-augmented data. The IoU score is the performance metric that we have considered. Based on my observation, the U-NET trained on non-augmented data is the best model, since it has the highest IoU score.

The error analysis can be performed on a sample of the data. If you have the time and sufficient compute, you can also use the whole dataset. For this analysis, I have considered only 100 random images.
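One way to organize such an analysis is to score each sampled image separately and look at the worst cases first. The sketch below is a minimal illustration on toy masks. The helper names and the dictionary layout are my own assumptions, not the code from the notebook.

```python
import random

import numpy as np


def iou_score(y_true, y_pred):
    """Intersection over union of two binary masks (non-zero = road)."""
    y_true = np.asarray(y_true) > 0
    y_pred = np.asarray(y_pred) > 0
    union = np.logical_or(y_true, y_pred).sum()
    return 1.0 if union == 0 else float(np.logical_and(y_true, y_pred).sum() / union)


def sample_ids(image_ids, n=100, seed=0):
    """Pick up to n image ids at random, reproducibly."""
    ids = list(image_ids)
    return random.Random(seed).sample(ids, min(n, len(ids)))


def rank_by_iou(masks_by_id):
    """Sort {image_id: (true_mask, predicted_mask)} from worst to best IoU."""
    scores = {k: iou_score(t, p) for k, (t, p) in masks_by_id.items()}
    return sorted(scores.items(), key=lambda item: item[1])


# Toy ground truth with one road row, and three hypothetical predictions.
truth = np.zeros((4, 4), dtype=np.uint8)
truth[1, :] = 255
half = np.zeros_like(truth)
half[1, :2] = 255

toy = {
    "perfect": (truth, truth),
    "half": (truth, half),
    "miss": (truth, np.zeros_like(truth)),
}
ranking = rank_by_iou(toy)
print(ranking)  # [('miss', 0.0), ('half', 0.5), ('perfect', 1.0)]
```

Inspecting the lowest-scoring images by eye usually says more about what a model gets wrong than the average score does.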

Feel free to check my notebook for deep error analysis hosted on the Jovian platform.

Single Image Segmentation

As part of this, we need to extract the road path when a single satellite image is passed as input to the model. This is important in real-life scenarios, as we do not generally pass the entire test set to the model. Rather than returning only the prediction mask, it is more useful to highlight the road path on the satellite image itself. We can highlight the path in any color.

Once we have the model loaded, we can pass a single image to get the route. After getting the prediction mask, we can use simple image processing techniques to retain the background and highlight only the path.

Hence, the below function.
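A minimal sketch of such a highlighting step might look like this, assuming the model output is a 2-D array of road probabilities with the same height and width as the image. The function name, the red color, the 0.5 threshold, and the blending weight are all illustrative choices.

```python
import numpy as np


def highlight_roads(image, prediction, color=(255, 0, 0), threshold=0.5, alpha=0.6):
    """Blend `color` into the pixels whose road probability exceeds `threshold`."""
    image = np.asarray(image, dtype=np.float32)
    road = np.asarray(prediction) > threshold  # (H, W) boolean road map
    tint = np.asarray(color, dtype=np.float32)
    out = image.copy()
    # Only road pixels are blended; the background keeps its original values.
    out[road] = (1.0 - alpha) * image[road] + alpha * tint
    return out.round().astype(np.uint8)


# Toy example: a flat grey image and a prediction with one road row.
grey = np.full((4, 4, 3), 100, dtype=np.uint8)
pred = np.zeros((4, 4), dtype=np.float32)
pred[1, :] = 0.9

overlay = highlight_roads(grey, pred)
print(overlay[0, 0].tolist(), overlay[1, 0].tolist())  # [100, 100, 100] [193, 40, 40]
```

Blending the color into the image, rather than painting over it, keeps the road surface visible underneath the highlight.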

No Augmentation

100034_sat

100393_sat

Augmentation

100034_sat

100393_sat

UI Part

Video Demonstration

Jovian Project Link → jovian.ai/msameeruddin/collections/deep-glo..

